Focused ambitions

Focused ambitions is part of the Look back feature from the Spring 2023 issue of Michigan Engineer magazine.

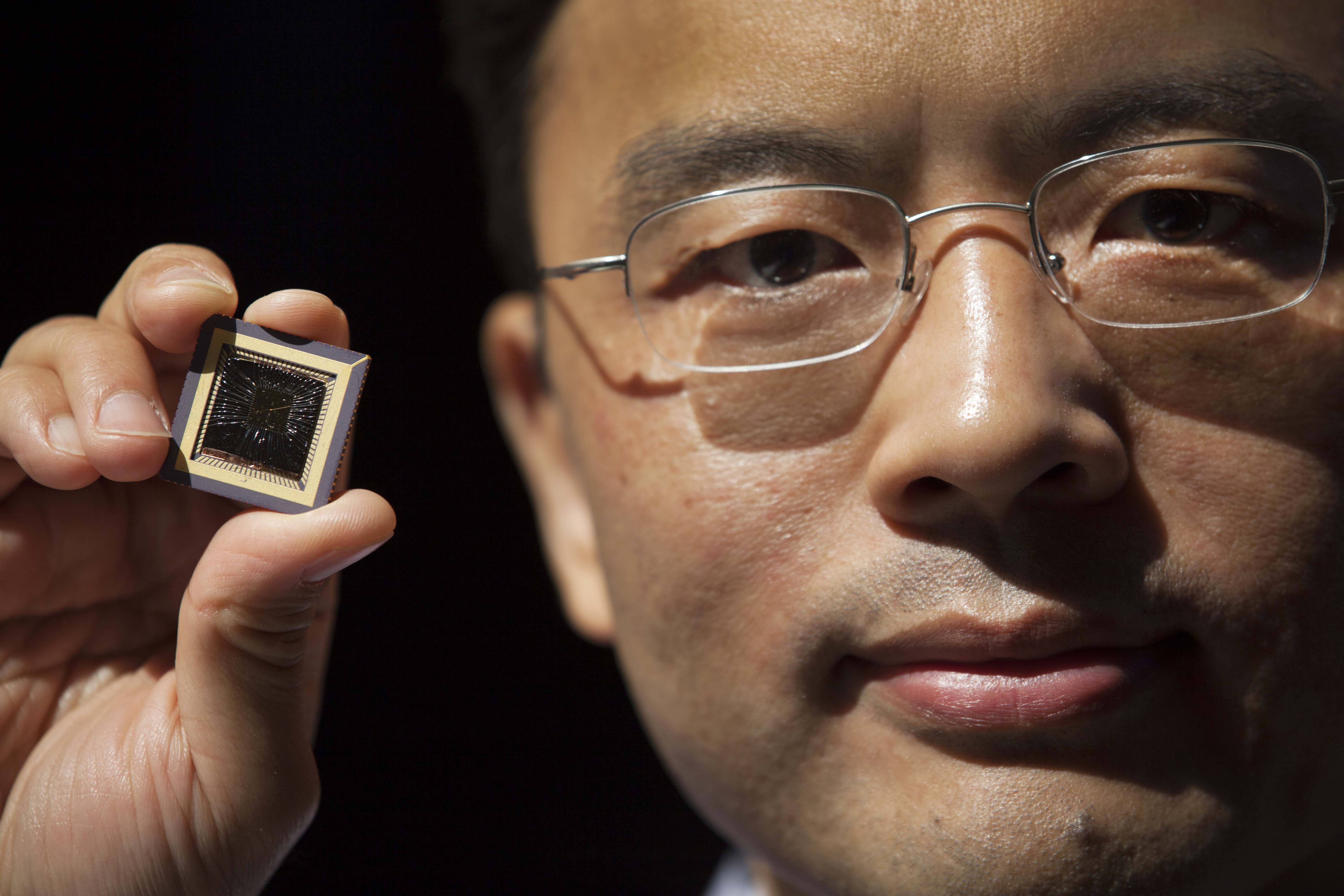

In the article “Designing Intelligence,” published in the spring 2015 issue, John Laird, now a professor emeritus of electrical engineering and computer science, represented the “symbols-based” approach to artificial intelligence, which directly trains AI systems on how to accomplish tasks. Meanwhile, Wei Lu, a professor of electrical engineering and computer science, represented the hardware side of the neural network approach, which provides a system with annotated data and expects it to figure out the connections for itself.

Interest in AI is still very high, although some of the emphasis has changed. Since 2015, neural network and machine learning approaches to artificial intelligence have grown enormously in terms of both funding and influence.

Changes at U-M reflect this. Neural networks have been applied by researchers around the world and around the College—not just for classic AI problems such as robot motion and the manipulation of objects, but also for answering scientific and medical questions.

“As neural networks are getting better and more popular, people want so-called explainable AI. They actually want to understand how the neural network works,” said Lu.

Understanding machine learning models serves two important purposes: It enables researchers to draw scientific insights from the models, and it makes the models more trustworthy because their decisions can be understood. Indeed, researchers are increasingly designing models that reveal the connections they find. Two such models have been developed at U-M: One suggests how to tune a catalyst so that it is better at driving a chemical reaction, and the other unveils the dynamics among microbial communities in the human gut.

To Lu, this approach represents the best of both worlds: It is not limited by the knowledge and experience of human experts, as symbolic models are, yet it builds human knowledge rather than allowing models to remain inscrutable.

All this interest in machine learning models means that they attract a lot of the AI funding. But rather than trying to build a general intelligence that is capable of learning as flexibly as human minds, projects tend to focus on solving narrow problems. For instance, chatbots give the illusion of intelligence, yet they’re fundamentally word prediction algorithms. They sometimes give accurate information, but frequently string together sentences of plausible-sounding nonsense.

Lu points out that over the same span, the automotive industry has reined in its ambitions, shifting from full autonomy in the near term to greater reliability at simpler problems like active lane following, which largely keeps the vehicle on track but still needs human attention.

As neural networks remain key to the limited AI proliferating across our devices, Lu’s memristor technology has found its niche with a startup called MemryX. Machine learning models developed in the cloud often need to be simplified to run efficiently on processors in “edge” devices such as phones and automobiles.

“What drives us is we want to ensure that a user will get the same performance and accuracy when they deploy the model at the edge as they do in model training in the cloud,” said Lu.

MemryX has attracted significant interest in the last year because its chips, with their neuron-like connections, are capable of efficiently running the full models. So while hype about conscious machines and fully autonomous cars remains overblown, you can expect to continue seeing AI slowly expanding into new areas of our lives, solving narrow problems more and more reliably.
